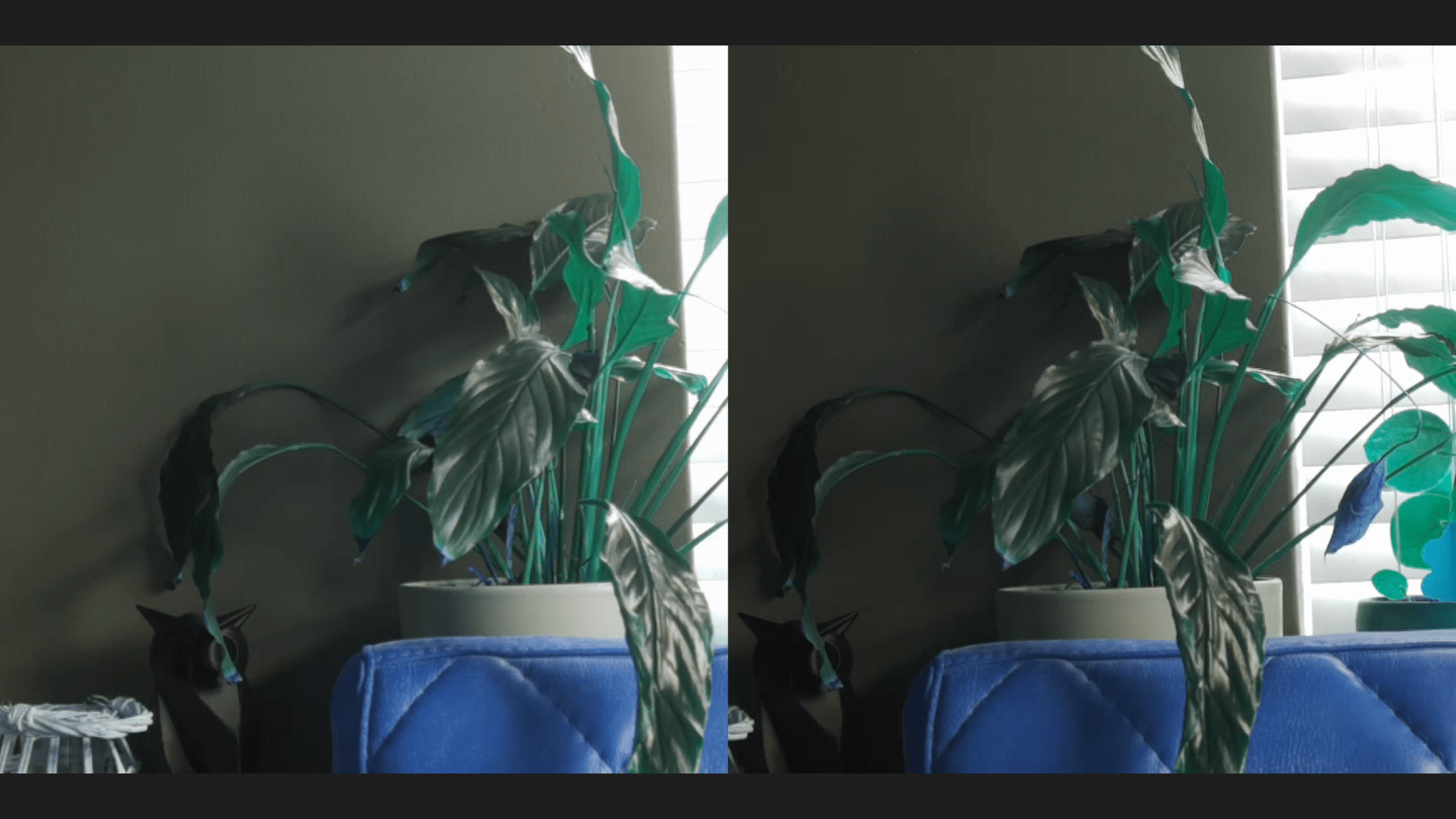

My girlfriend and some of my nerd friends often want to show something on their desktop, or I want to show something that's easy to view remotely.

Sometimes we use screen share in Jitsi for this.

But I wanted a better solution, one that also works with OBS, or even lets me share my webcams remotely.

So I started testing RTMP/RTSP forwarding using Apache, port forwarding and nginx.

These were not to my liking … until I found MediaMTX.

https://github.com/bluenviron/mediamtx

This is an awesome tool, but configuring it is something else.

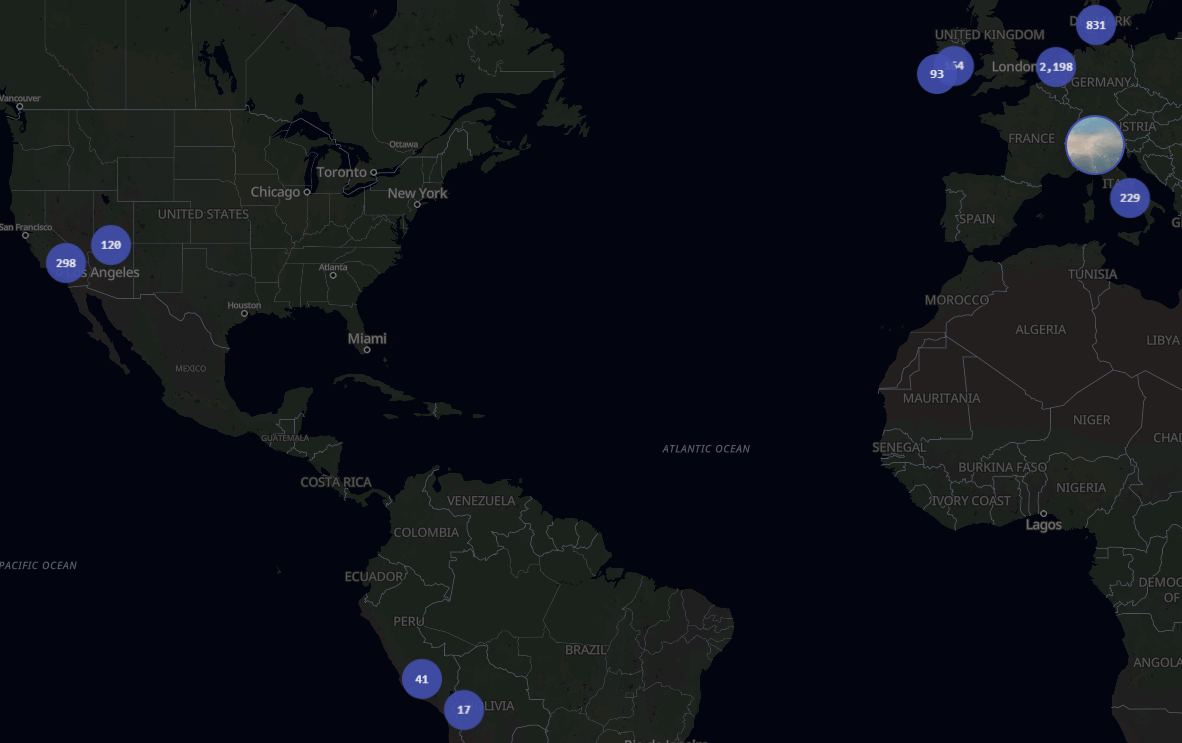

Now I’ve got a setup which can do:

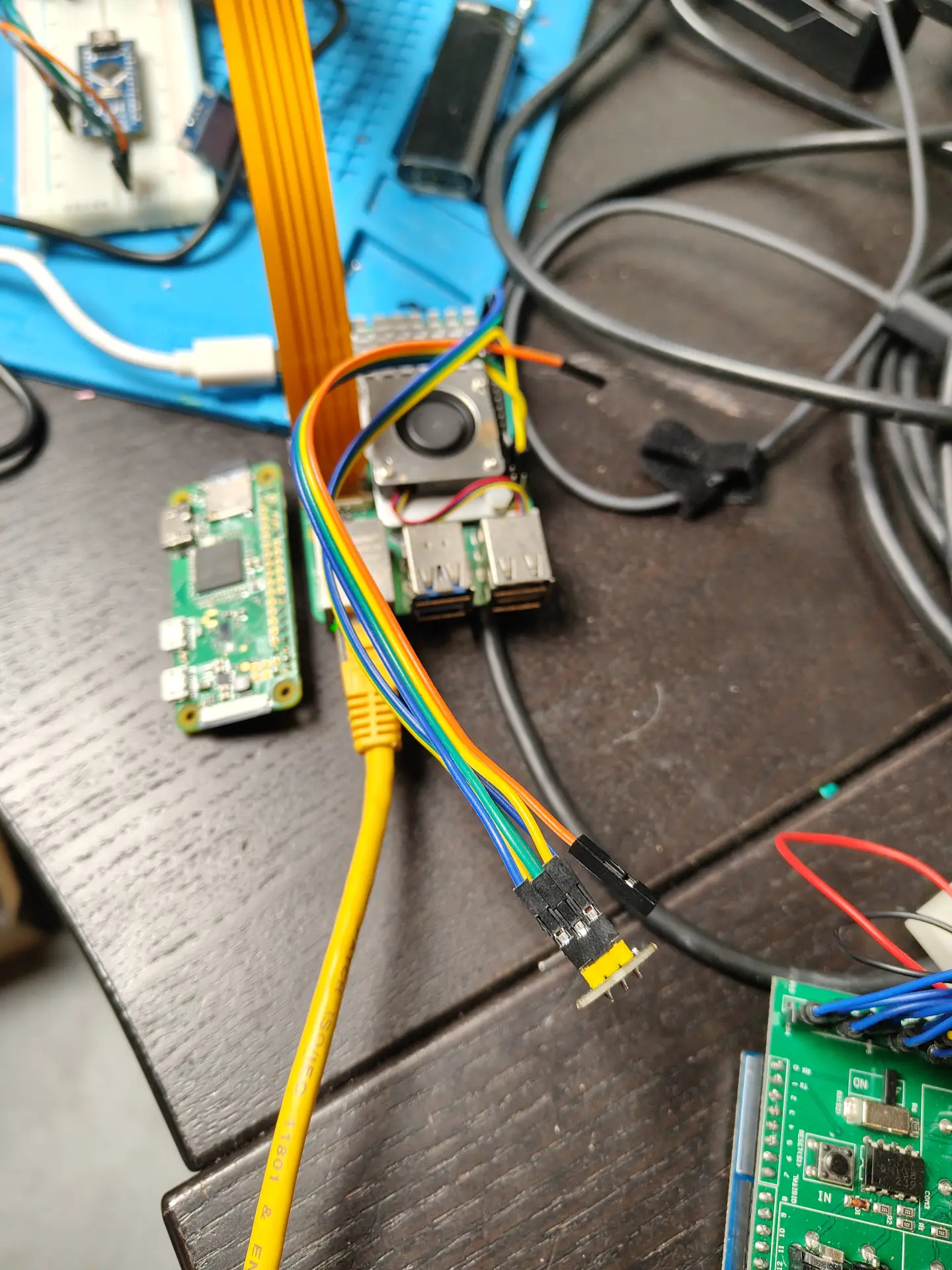

- Forward RTMP streams from my webcams (pulled from the camera)

- Publish my OBS screen on a website

- Share browser tabs, complete browser windows and whole desktop screens using WebRTC (with sound)

This works in all browsers that support WebRTC.
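Whether a given browser can publish a screen share can be checked before anything else runs. The sketch below is only an illustration: the function name `canShareScreen` and the idea of passing in the global object are assumptions, not part of the pages further down. It tests for the two browser APIs the publishing page relies on, `RTCPeerConnection` and `navigator.mediaDevices.getDisplayMedia`.

```javascript
// Hypothetical helper: report whether a browser-like global object exposes
// the two APIs needed to publish a screen share over WebRTC.
function canShareScreen(globalObj) {
  const hasPeerConnection = typeof globalObj.RTCPeerConnection === "function";
  const mediaDevices = globalObj.navigator && globalObj.navigator.mediaDevices;
  const hasDisplayMedia =
    Boolean(mediaDevices) && typeof mediaDevices.getDisplayMedia === "function";
  return hasPeerConnection && hasDisplayMedia;
}

// In a page this would be called as: canShareScreen(window)
```

Viewing only needs `RTCPeerConnection`; the `getDisplayMedia` check matters for the publishing side.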

I used coturn and STUN in a previous test; they are not needed anymore.

Mediamtx.yml config

webrtc: yes

webrtcAllowOrigins: ["*"]

webrtcAdditionalHosts: [xyz.henriaanstoot.nl]

webrtcEncryption: yes

webrtcServerCert: server.crt

webrtcServerKey: server.key

webrtcAddress: :8889

webrtcLocalUDPAddress: :8189

paths:

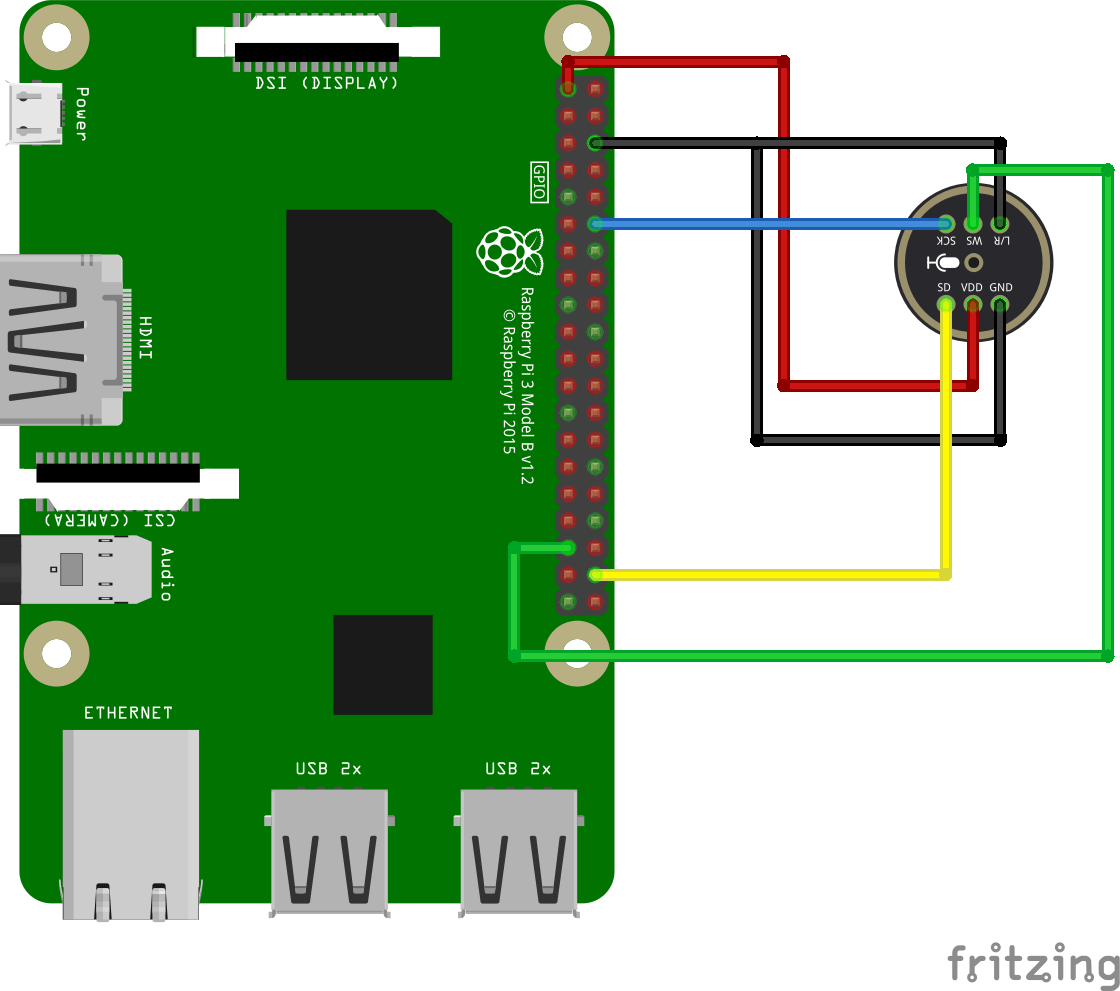

cam:

source: rtsp://admin:adminpass@192.168.178.2/h265Preview_01_main

live/test:

source: publisher

streamme:

runOnInit: ""
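Each path name in the config becomes part of the URL a browser talks to: the pages in this post publish to `/streamme/whip` and read from `/streamme/whep`. As a rough sketch of that mapping, the hypothetical helper below (the name `endpointUrl` and its parameters are assumptions made for illustration) builds the publish or read URL for any path name.

```javascript
// Hypothetical helper: build the WHIP (publish) or WHEP (read) URL
// for a MediaMTX path name such as "streamme" or "live/test".
function endpointUrl(baseUrl, pathName, mode) {
  if (mode !== "whip" && mode !== "whep") {
    throw new Error(`mode must be "whip" or "whep", got "${mode}"`);
  }
  // Strip stray slashes so "live/test" and "/live/test/" give the same URL.
  const cleanPath = pathName.replace(/^\/+|\/+$/g, "");
  const base = baseUrl.endsWith("/") ? baseUrl : baseUrl + "/";
  return new URL(`${cleanPath}/${mode}`, base).href;
}

console.log(endpointUrl("https://xyz.henriaanstoot.nl", "streamme", "whip"));
// prints "https://xyz.henriaanstoot.nl/streamme/whip"
console.log(endpointUrl("https://xyz.henriaanstoot.nl", "cam", "whep"));
// prints "https://xyz.henriaanstoot.nl/cam/whep"
```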

server.html

    <!DOCTYPE html>
    <html>
    <body>
    <button onclick="startStreaming()">Start Screen Share</button>
    <br><br>
    <video id="preview" autoplay playsinline muted style="width: 600px;"></video>
    <script>
    async function startStreaming() {
      const pc = new RTCPeerConnection({
        iceServers: [
          { urls: "stun:stun.l.google.com:19302" },
        ]
      });
      // Let the user pick a tab, window or whole screen (with audio)
      const stream = await navigator.mediaDevices.getDisplayMedia({
        video: true,
        audio: true
      });
      // Show a local preview of what is being shared
      const video = document.getElementById("preview");
      video.srcObject = stream;
      // Send every captured track over the peer connection
      stream.getTracks().forEach(track => pc.addTrack(track, stream));
      const offer = await pc.createOffer();
      await pc.setLocalDescription(offer);
      // Send the offer to MediaMTX (WHIP endpoint)
      const res = await fetch("https://xyz.henriaanstoot.nl/streamme/whip", {
        method: "POST",
        headers: { "Content-Type": "application/sdp" },
        body: offer.sdp
      });
      // The response body is the SDP answer
      const answerSDP = await res.text();
      await pc.setRemoteDescription({
        type: "answer",
        sdp: answerSDP
      });
    }
    </script>
    </body>
    </html>
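The page above passes whatever the server returns straight to `setRemoteDescription`, so a failed request shows up as a confusing SDP parse error. A minimal sketch of a stricter variant follows. The helper name `postOffer` and the injectable `fetchImpl` parameter are assumptions for illustration; the request itself is the same POST with `Content-Type: application/sdp` that both pages already send.

```javascript
// Hypothetical helper: POST an SDP offer to a WHIP or WHEP URL and return the SDP answer.
// fetchImpl is injectable so the function can also be exercised outside a browser.
async function postOffer(url, offerSdp, fetchImpl = fetch) {
  const res = await fetchImpl(url, {
    method: "POST",
    headers: { "Content-Type": "application/sdp" },
    body: offerSdp,
  });
  if (!res.ok) {
    // Surface the HTTP status instead of handing an error body to setRemoteDescription.
    throw new Error(`Signalling request to ${url} failed with HTTP ${res.status}`);
  }
  return res.text();
}
```

In either page it could stand in for the `fetch` and `res.text()` pair, for example `const answerSDP = await postOffer("https://xyz.henriaanstoot.nl/streamme/whip", offer.sdp);`.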

client.html

    <!DOCTYPE html>
    <html>
    <body>
    <h2></h2>
    <video id="video" autoplay playsinline controls style="width: 800px;"></video>
    <script>
    async function startViewing() {
      const pc = new RTCPeerConnection({
        iceServers: [
          { urls: "stun:stun.l.google.com:19302" },
        ]
      });
      const video = document.getElementById("video");
      // Receive tracks
      pc.ontrack = (event) => {
        video.srcObject = event.streams[0];
      };
      // Create offer (recvonly)
      const offer = await pc.createOffer({
        offerToReceiveVideo: true,
        offerToReceiveAudio: true
      });
      await pc.setLocalDescription(offer);
      // Send to MediaMTX (WHEP endpoint)
      const res = await fetch("https://xyz.henriaanstoot.nl/streamme/whep", {
        method: "POST",
        headers: { "Content-Type": "application/sdp" },
        body: offer.sdp
      });
      const answerSDP = await res.text();
      await pc.setRemoteDescription({
        type: "answer",
        sdp: answerSDP
      });
    }
    // auto start
    startViewing();
    </script>
    </body>
    </html>
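The viewer starts on page load and the `<video>` element is not muted, so a browser's autoplay policy may refuse to start playback with sound until the user interacts with the page. One possible way around that, sketched under the assumption that falling back to muted playback is acceptable (the helper name `playWithFallback` is hypothetical), is to call `play()` explicitly and retry muted if it is rejected.

```javascript
// Hypothetical helper: try to play with sound, fall back to muted playback.
// `video` is an HTMLVideoElement (or any object with play() and a muted property).
async function playWithFallback(video) {
  try {
    await video.play();
    return "with-sound";
  } catch (err) {
    // Playback with sound was refused; muted playback is normally allowed.
    video.muted = true;
    await video.play();
    return "muted";
  }
}
```

It could be called from the `ontrack` handler right after `video.srcObject` is set; the `controls` attribute already on the element lets the viewer unmute by hand.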