A dump of my Immich experience

Getting lists of filenames from an album.

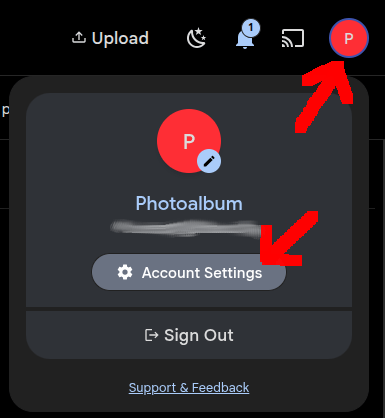

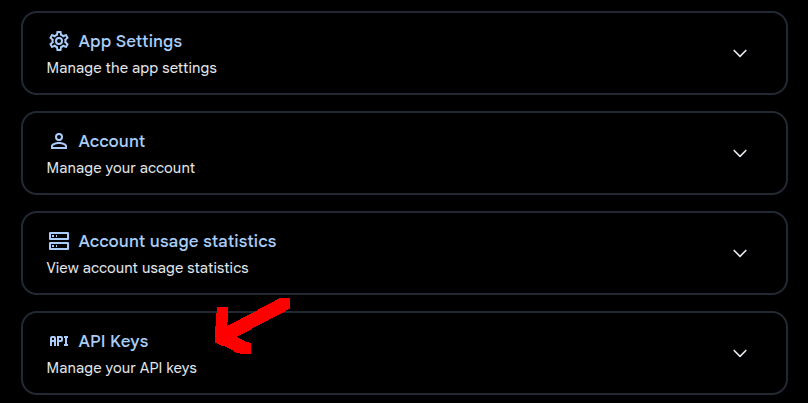

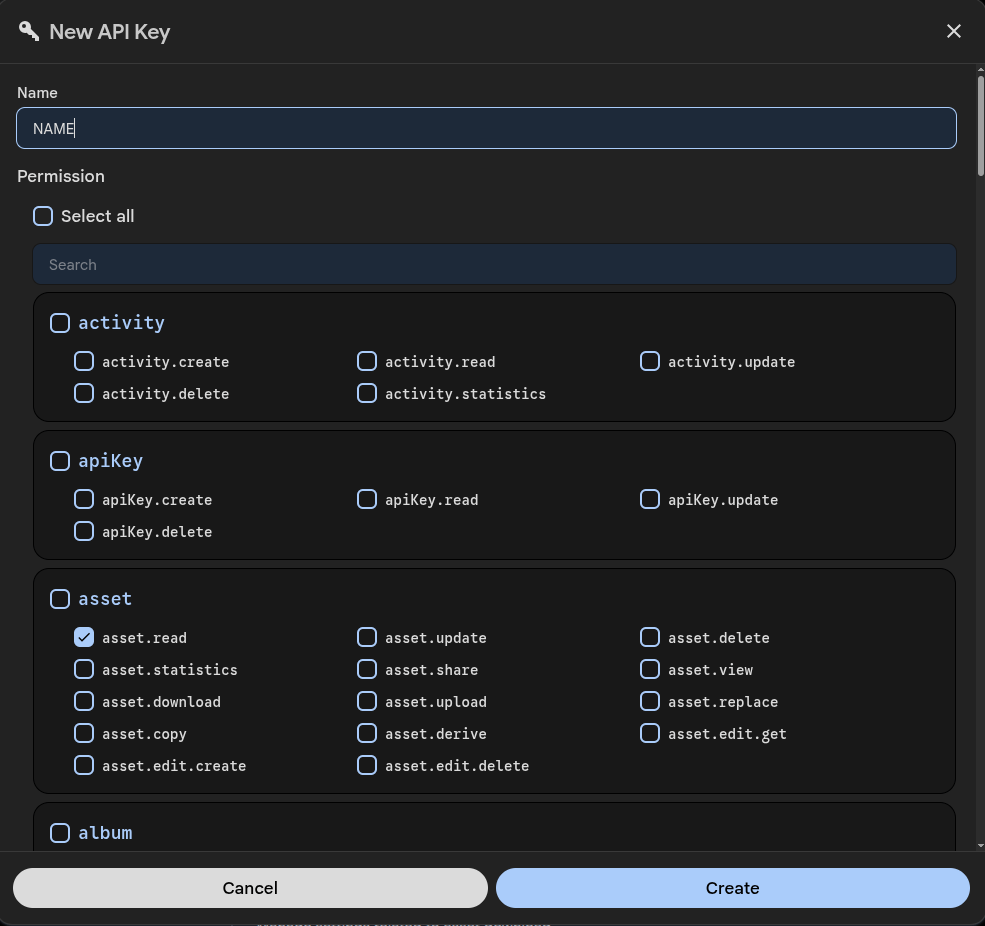

Create an API key in your Immich instance.

NOTE: The key needs the album.read and asset.read permissions.

Then get the ID of the album you want to list images from.

Open an album in your browser and copy the ID from the URL.
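If you would rather not copy the ID by hand, a small helper can pull it out of a pasted URL. This is only a sketch: it assumes the album URL contains `/albums/<uuid>`, and `album_id_from_url` is a name made up for this example.

```python
import re
from typing import Optional

# Assumption: album URLs look like ".../albums/<uuid>". Adjust if yours differ.
ALBUM_URL_RE = re.compile(
    r"/albums/([0-9a-fA-F]{8}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}"
    r"-[0-9a-fA-F]{4}-[0-9a-fA-F]{12})"
)


def album_id_from_url(url: str) -> Optional[str]:
    """Return the album UUID found in a browser URL, or None if there is none."""
    match = ALBUM_URL_RE.search(url)
    return match.group(1) if match else None


if __name__ == "__main__":
    # Hypothetical URL, for illustration only.
    print(album_id_from_url(
        "https://myphotos.example.com/albums/f6a300c2-5027-4c38-a367-0123456789ab"
    ))
```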

Code to get a file list using curl:

    curl -s -H "x-api-key: 2Nk4sO4eEm001Cm1Dsnl32UVDEvxxxxxxxxxxxxxxxxx" "https://myphotos.example.com/api/albums/f6a300c2-5027-4c38-a367-xxxxxxxxxxxxxx" | jq -r '.assets[].originalFileName'
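The same request can be sketched in Python with only the standard library. The function names are invented for this example; the endpoint, the `x-api-key` header and the `originalFileName` field are the ones used in the command above.

```python
import json
import urllib.request


def original_filenames(album: dict) -> list[str]:
    """Collect originalFileName from every asset in a parsed album response."""
    return [asset["originalFileName"] for asset in album.get("assets", [])]


def fetch_album(base_url: str, api_key: str, album_id: str) -> dict:
    """GET <base_url>/api/albums/<album_id> with the x-api-key header."""
    request = urllib.request.Request(
        f"{base_url}/api/albums/{album_id}",
        headers={"x-api-key": api_key},
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


# Usage (placeholders, not real values):
# album = fetch_album("https://myphotos.example.com", "YOUR_API_KEY", "YOUR_ALBUM_ID")
# print("\n".join(original_filenames(album)))
```

Keeping the parsing separate from the network call makes it easy to try the filename extraction on a saved JSON response first.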

Fixing WhatsApp

When you ingest WhatsApp media, the dates in the database will be the ingest date. This is because WhatsApp strips the GPS, date and other EXIF information from the media.

NOTES:

- Always import your camera media first. These files contain all EXIF info, so if you later upload WhatsApp media containing the same image, it can be skipped. (See the deduplication tip below.)

- WhatsApp media auto-uploaded by the app on your phone rarely needs adjusting. (When a photo is taken and uploaded on the same day, the ingest date already falls on the correct day.)

Luckily, WhatsApp media contains the date in the filename. Clone this tool somewhere on your desktop system or laptop:

    git clone https://github.com/FlorianKrauseResearch/Immich-Metadata-Update.git

Installation and usage are described here: https://github.com/FlorianKrauseResearch/Immich-Metadata-Update

Create a new API key with enough rights!

The software connects to your Immich instance, searches for discrepancies between the ingest dates and the dates in WhatsApp filenames, and fixes them in the Immich database.
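To picture the kind of check involved, here is a minimal sketch. It is not the tool's actual code: the regex and the function names are assumptions for illustration, and it compares calendar days only.

```python
import re
from datetime import date, datetime
from typing import Optional

# Matches names like IMG-20230415-WA0012.jpg or VID-20230415-WA0003.mp4.
WHATSAPP_RE = re.compile(r"^(?:IMG|VID)-(\d{8})-WA\d{4}\.\w+$")


def date_from_filename(filename: str) -> Optional[date]:
    """Parse the YYYYMMDD part of a WhatsApp filename, or return None."""
    match = WHATSAPP_RE.match(filename)
    if not match:
        return None
    try:
        return datetime.strptime(match.group(1), "%Y%m%d").date()
    except ValueError:
        return None  # eight digits that do not form a valid date


def suggested_correction(filename: str, recorded: datetime) -> Optional[date]:
    """Return the filename date if it differs from the recorded day, else None."""
    parsed = date_from_filename(filename)
    if parsed is None or parsed == recorded.date():
        return None
    return parsed


if __name__ == "__main__":
    # Hypothetical asset: ingested in 2024, but the filename says April 2023.
    print(suggested_correction("IMG-20230415-WA0012.jpg", datetime(2024, 1, 2, 10, 0)))
```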

I keep a separate directory containing the code above for every user, each with its own .env file and custom filters.

I've edited immich_metadata_update/filters.py:

    BUILTIN_PATTERNS: dict[str, DatePattern] = {
        "whatsapp": DatePattern(
            name="WhatsApp",
            regex=r"^IMG-(\d{8})-WA\d{4}\.\w+$",
            date_format="%Y%m%d",
        ),
        "whatsappvid": DatePattern(
            name="WhatsApp",
            regex=r"^VID-(\d{8})-WA\d{4}\.\w+$",
            date_format="%Y%m%d",
        ),
        "screenshot_basic": DatePattern(
            name="Screenshot (basic)",
            regex=r"^Screenshot_(\d{8})-\d{6}\.\w+$",
            date_format="%Y%m%d",
        ),
        "screenshot_full": DatePattern(
            name="Screenshot (with app name)",
            regex=r"^Screenshot_(\d{4}-\d{2}-\d{2}).*$",
            date_format="%Y-%m-%d",
        ),
        "signal": DatePattern(
            name="Signal",
            regex=r"^signal-(\d{4}-\d{2}-\d{2})-\d{2}-\d{2}-\d{2}-.*$",
            date_format="%Y-%m-%d",
        ),
    }
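Before pointing custom patterns at a real library, it can help to try them on a few filenames offline. The sketch below uses a stand-in `DatePattern` dataclass with the same three fields; it is not the repository's class, and the sample filenames are made up.

```python
import re
from dataclasses import dataclass
from datetime import date, datetime
from typing import Optional


@dataclass
class DatePattern:
    """Stand-in with the three fields used in filters.py; NOT the repo's class."""
    name: str
    regex: str
    date_format: str


PATTERNS = {
    "whatsappvid": DatePattern(
        "WhatsApp", r"^VID-(\d{8})-WA\d{4}\.\w+$", "%Y%m%d"),
    "screenshot_full": DatePattern(
        "Screenshot (with app name)", r"^Screenshot_(\d{4}-\d{2}-\d{2}).*$", "%Y-%m-%d"),
    "signal": DatePattern(
        "Signal", r"^signal-(\d{4}-\d{2}-\d{2})-\d{2}-\d{2}-\d{2}-.*$", "%Y-%m-%d"),
}


def first_match(filename: str) -> Optional[tuple[str, date]]:
    """Return (pattern key, parsed date) for the first matching pattern."""
    for key, pattern in PATTERNS.items():
        match = re.match(pattern.regex, filename)
        if match:
            parsed = datetime.strptime(match.group(1), pattern.date_format)
            return key, parsed.date()
    return None


if __name__ == "__main__":
    # Made-up filenames, one per pattern plus one that should not match.
    for sample in [
        "VID-20240102-WA0007.mp4",
        "Screenshot_2023-11-05-14-22-10-123_com.example.app.jpg",
        "signal-2022-07-30-18-45-12-345.jpg",
        "holiday.jpg",
    ]:
        print(sample, "->", first_match(sample))
```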

    python3 run.py --preset whatsappvid
    python3 run.py --preset whatsappvid --apply corrections.json

Incorrect MAP location (0,0 problem, AKA Null Island)

Sometimes media has an incorrect GPS location, no location at all, or, as in the heading above, a location of 0,0.
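Missing and 0,0 coordinates are easy to spot mechanically; a location that is merely wrong is not. The sketch below assumes each asset dict carries `exifInfo.latitude` and `exifInfo.longitude`, which you should verify against your own API responses. The function names are invented for the example.

```python
from typing import Optional


def location_problem(lat: Optional[float], lon: Optional[float]) -> Optional[str]:
    """Classify obviously bad coordinates; return None if they look plausible."""
    if lat is None or lon is None:
        return "missing"
    if lat == 0 and lon == 0:
        return "null island"
    if not (-90 <= lat <= 90 and -180 <= lon <= 180):
        return "out of range"
    return None


def suspicious_assets(assets: list[dict]) -> list[tuple[str, str]]:
    """Return (filename, problem) pairs for assets with obviously bad locations.

    Assumption: coordinates live under asset["exifInfo"].
    """
    found = []
    for asset in assets:
        exif = asset.get("exifInfo") or {}
        problem = location_problem(exif.get("latitude"), exif.get("longitude"))
        if problem:
            found.append((asset["originalFileName"], problem))
    return found
```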

You CAN change the location of images using the map in Immich.

(Select Map > day or image > menu: Change location)

(Also under Utilities)

Immich WILL NOT change your image! It writes a sidecar file with the updated location info.

How I like to fix this:

- Download the images whose GPS information you want to remove.

- Delete them from Immich.

- Run the command below over those images to remove the GPS tags, then reupload them.

    exiftool -gps:all= FILENAME
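For a whole folder of downloaded images, the same command can be looped from Python. This is a sketch that assumes `exiftool` is on your PATH and only touches JPEG files; the function names are invented here. By default exiftool leaves a backup copy next to each modified file, named with an `_original` suffix.

```python
import subprocess
from pathlib import Path


def gps_strip_command(path: Path) -> list[str]:
    """Build the exiftool call that clears all GPS tags for one file."""
    return ["exiftool", "-gps:all=", str(path)]


def strip_gps(directory: Path, suffixes=(".jpg", ".jpeg")) -> int:
    """Run exiftool on every matching file in directory; return how many ran."""
    count = 0
    # sorted() builds the full listing before exiftool creates any backup files.
    for path in sorted(directory.iterdir()):
        if path.is_file() and path.suffix.lower() in suffixes:
            subprocess.run(gps_strip_command(path), check=True)
            count += 1
    return count


# Usage (hypothetical folder):
# strip_gps(Path("./downloaded"))
```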

Loads of the same images

Deduplicate? Use Utilities > Review duplicates.

Keep the camera image instead of the WhatsApp image.

(Most of the time it is bigger and has all EXIF information!)

Burst photos or similar photos? Use stacking. This shows only ONE thumbnail in albums and the timeline.

Another solution is moving them to the Archive!

Uploading using immich-go

https://github.com/simulot/immich-go

    ./immich-go upload from-folder --server http://192.168.1.2:2283 --api-key GdMq6lZU8Szw6Lc2TXXXXXXXXXXXXXXXXXXXXXX --folder-as-album=FOLDER ~/Pictures/Screenshots/

NOTE: Subdirectories become new albums.
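Because subdirectories turn into albums, it can be worth previewing the folder layout before uploading. The sketch below only counts files per containing folder name; it assumes the album name equals the folder name, and it does not call immich-go at all. `preview_albums` is a name made up for this example.

```python
from collections import Counter
from pathlib import Path


def preview_albums(root: Path) -> Counter:
    """Count files per containing folder name (the presumed album name)."""
    counts = Counter()
    for path in root.rglob("*"):
        if path.is_file():
            counts[path.parent.name] += 1
    return counts


# Usage (hypothetical folder):
# for album, n in sorted(preview_albums(Path.home() / "Pictures" / "Screenshots").items()):
#     print(f"{album}: {n} files")
```

Two folders with the same name in different places are counted together here, which is also a hint that they may end up sharing an album name.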

Immich Power Tools

https://github.com/immich-power-tools/immich-power-tools

- Manage people data in bulk : Options to update people data in bulk, with advanced filters.

- People Merge Suggestion : Option to bulk merge people with suggested faces based on similarity.

- Update Missing Locations : Find assets in your library that have no location and update their location.

- Potential Albums : Suggests albums that could be created based on the assets and people in your library.

- Analytics : Get analytics on your library, like assets over time, EXIF data, etc.

- Smart Search : Search your library with natural language, supports queries like “show me all my photos from 2024 of “

- Bulk Date Offset : Offset the date of selected assets by a given amount of time. Mainly used to fix the date of assets that are out of sync with the actual date.
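To make the date offset idea concrete: if a camera clock was one hour behind, every timestamp from it needs the same shift, and that can move an asset onto another day. This is a plain Python illustration, not code from Immich Power Tools.

```python
from datetime import datetime, timedelta


def shift_dates(dates: list[datetime], offset: timedelta) -> list[datetime]:
    """Apply the same offset to every timestamp."""
    return [d + offset for d in dates]


if __name__ == "__main__":
    # Made-up timestamps from a camera whose clock was one hour behind.
    taken = [datetime(2024, 7, 1, 23, 30), datetime(2024, 7, 2, 8, 15)]
    for before, after in zip(taken, shift_dates(taken, timedelta(hours=1))):
        print(before, "->", after)
```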

Python script to download an album (with a filename filter)

NOTE: Uncomment the two commented lines at the bottom of main() to also DELETE the downloaded assets from Immich.

    import os

    import requests

    IMMICH_URL = "http://192.168.1.2:2283/api"
    API_KEY = "2Nk4sO4eEm001Cm1Dsnl3XXXXXXXXXXXXXXX"
    ALBUM_ID = "c4ce0661-0c4c-4c49-b6c1-XXXXXXXXXXXXXXXXXXXXX"
    FILENAME_PREFIX = "VID_"  # filename filter

    HEADERS = {
        "x-api-key": API_KEY
    }

    DOWNLOAD_DIR = "./downloaded"
    os.makedirs(DOWNLOAD_DIR, exist_ok=True)


    def get_album_assets(album_id):
        """Fetch the album and return its list of assets."""
        r = requests.get(
            f"{IMMICH_URL}/albums/{album_id}",
            headers=HEADERS
        )
        r.raise_for_status()
        return r.json()["assets"]


    def filter_assets(assets):
        # simulate SQL LIKE '<FILENAME_PREFIX>%'
        return [
            a for a in assets
            if a["originalFileName"].startswith(FILENAME_PREFIX)
        ]


    def download_asset(asset):
        """Stream the original file to DOWNLOAD_DIR and return its path."""
        asset_id = asset["id"]
        filename = asset["originalFileName"]
        url = f"{IMMICH_URL}/assets/{asset_id}/original"
        r = requests.get(url, headers=HEADERS, stream=True)
        r.raise_for_status()
        path = os.path.join(DOWNLOAD_DIR, filename)
        with open(path, "wb") as f:
            for chunk in r.iter_content(8192):
                f.write(chunk)
        return path


    def delete_assets(asset_ids):
        """Delete the given assets from Immich."""
        r = requests.delete(
            f"{IMMICH_URL}/assets",
            headers=HEADERS,
            json={"ids": asset_ids}
        )
        r.raise_for_status()


    def main():
        print("Fetching album assets...")
        assets = get_album_assets(ALBUM_ID)
        print(f"Total assets in album: {len(assets)}")

        print("Filtering by filename...")
        filtered = filter_assets(assets)
        print(f"Matched assets: {len(filtered)}")

        downloaded = []
        print("Downloading...")
        for asset in filtered:
            try:
                path = download_asset(asset)
                downloaded.append((asset["id"], path))
            except Exception as e:
                print(f"Download failed: {asset['id']} - {e}")

        # VERIFY: every matched asset must be on disk and non-empty
        print("Verifying...")
        if len(downloaded) != len(filtered):
            print("Download mismatch. Abort delete.")
            return
        for _, path in downloaded:
            if not os.path.exists(path) or os.path.getsize(path) == 0:
                print(f"Invalid file: {path}")
                return
        print("Verification OK")

        # DELETE (uncomment the two lines below to remove the assets from Immich)
        ids_to_delete = [asset_id for asset_id, _ in downloaded]
        #print("Deleting assets...")
        #delete_assets(ids_to_delete)

        print("Done!")


    if __name__ == "__main__":
        main()
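One thing to watch: the script saves each asset under its originalFileName, so two assets with the same name would overwrite each other and the verification step would still pass. A possible guard is a helper like the one sketched below. `unique_path` is a name invented for this example; it could be used in download_asset in place of the plain os.path.join.

```python
import os


def unique_path(directory: str, filename: str) -> str:
    """Return a path in directory that does not exist yet.

    If filename is taken, append _1, _2, ... before the extension.
    """
    stem, ext = os.path.splitext(filename)
    candidate = os.path.join(directory, filename)
    counter = 1
    while os.path.exists(candidate):
        candidate = os.path.join(directory, f"{stem}_{counter}{ext}")
        counter += 1
    return candidate


# Usage inside download_asset (sketch):
# path = unique_path(DOWNLOAD_DIR, filename)
```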